How a European Corporate Bank achieved perpetual KYC

Challenge

We’ve been working with a customer that, year after year, struggled to get through its periodic review cycles. Whether done internally or through an outsourced partner, the KYC refresh exercise placed a significant burden on the organisation. In practice, the process meant re-running KYC almost from scratch, because the output of prior KYC and periodic reviews was of poor quality, had been created manually and was stored in a state that made it virtually impossible to reuse.

As a large European corporate bank, they faced complex data challenges in servicing a customer base spread across a number of jurisdictions, each of which has its own specific requirements for data gathering, validation and enrichment.

Solution

The customer was looking for a solution that could turbo-charge their Customer Lifecycle Management (CLM) platform, so that customer profiles could be automatically enriched and refreshed on an ongoing basis using external data.

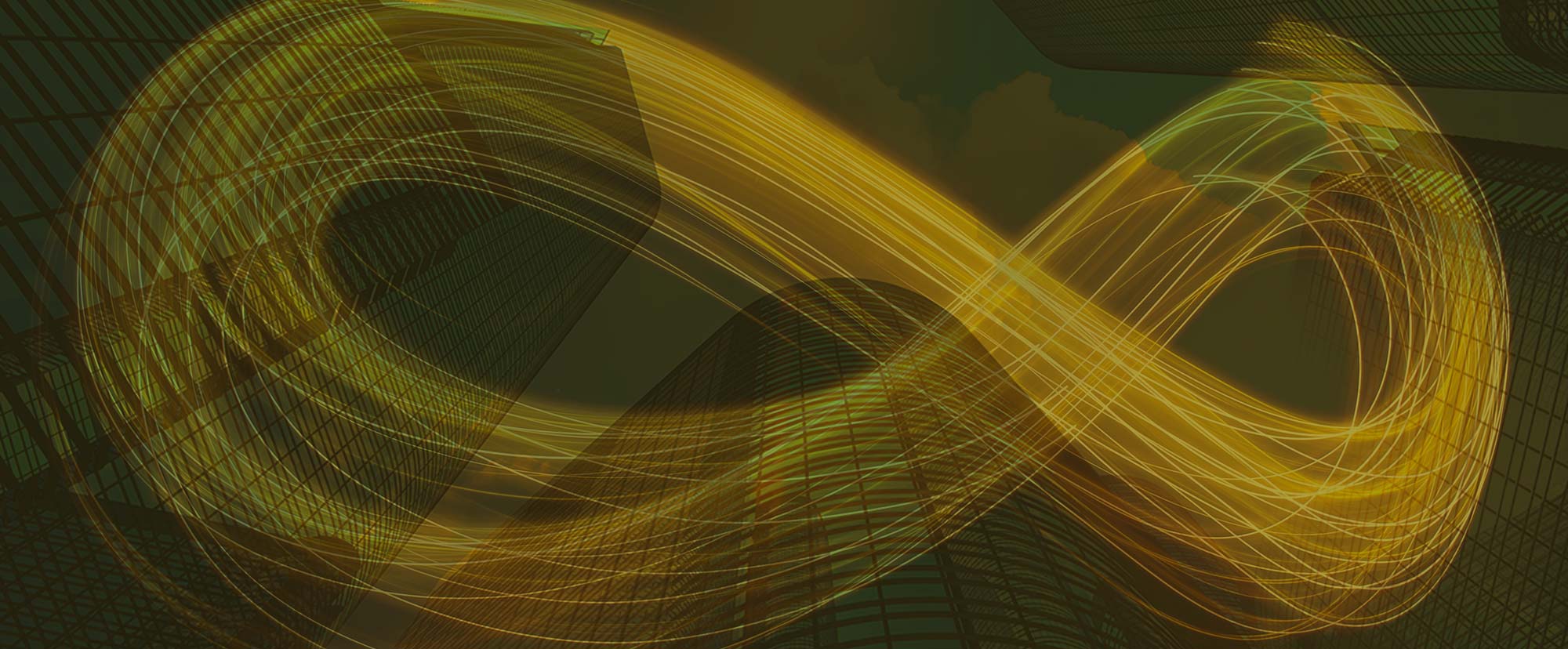

Data mapping

The first step was to investigate what customer data was already held internally, as well as the data requirements for different jurisdictions, risk levels and entity types, and to derive a preferred entity schema from them.

These requirements were then fed into our source knowledge base, which holds information on the data available from various external data sources. Finally, we mapped the entity schema to the data points available from each source to determine an automated KYC data refresh strategy for each entity.
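To make the mapping step more concrete, here is a minimal sketch of how an entity schema could be matched against a catalogue of sources. It is an illustration only, not the actual Arachnys knowledge base: the source names, field names, jurisdiction code and risk levels are all hypothetical.

```python
# Hypothetical catalogue: which data points each external source can supply.
SOURCE_CATALOGUE = {
    "company_registry": {"legal_name", "registered_address", "directors", "status"},
    "credit_bureau": {"legal_name", "registered_address", "shareholders"},
    "sanctions_list": {"watchlist_hits"},
}

# Hypothetical entity schema: required data points per (jurisdiction, risk level).
ENTITY_SCHEMA = {
    ("NL", "low"): ["legal_name", "registered_address", "status"],
    ("NL", "high"): [
        "legal_name", "registered_address", "status",
        "directors", "shareholders", "watchlist_hits", "tax_id",
    ],
}


def build_refresh_strategy(jurisdiction, risk_level):
    """Map each required data point to the sources able to supply it."""
    strategy = {}
    for field in ENTITY_SCHEMA[(jurisdiction, risk_level)]:
        strategy[field] = sorted(
            name for name, fields in SOURCE_CATALOGUE.items() if field in fields
        )
    return strategy


def uncovered_fields(strategy):
    """Data points no source can supply, so they cannot be refreshed automatically."""
    return [field for field, sources in strategy.items() if not sources]


strategy = build_refresh_strategy("NL", "high")
gaps = uncovered_fields(strategy)
```

In this sketch, any field returned by `uncovered_fields` would need another collection route, for example a request to the customer.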

Automated entity data upload

The next step was to get each of the bank’s customers into a state from which perpetual KYC, ongoing refresh or event-driven reviews could be triggered. This involved migrating profile information that had previously been collected manually into a structured baseline profile that could be monitored. The bank considered several options for this migration.

First, they could hire a large pool of analysts or an outsourced team to pull information out of the customer databases, validate and enrich it manually, and then store the data and its provenance in a structured form. Alternatively, they explored a technology solution that could do this work in a semi-automated way, enabling analysts to do the same work faster and more cheaply in a controlled environment. However, while both options are valid for onboarding new-to-bank customers or for ad hoc KYC refresh, either would have taken the bank several years to complete for all 240,000 of its corporate customers.

STP enrichment

The conclusion was that the only way to complete the migration without a considerable amount of pain was to do the work in batches, in a fully automated way, with only exceptions processed manually.

The bank opted to use Arachnys Unified Search and our Entity API to automatically validate and enrich internally held customer data with external data, producing a zero-state KYC profile for each customer. This profile consisted of various fields, each connected to the most relevant and most authoritative data source (also referred to as sources of hierarchy) for validating that particular data point.

As part of this exercise, we were able to create a high quality customer profile for a large share of the bank’s customers. We were also able to scan each entity for a number of critical risk factors, such as watchlist risk, address risk, operational risk and adverse media risk, and to identify relationships between the bank’s customers of which the bank had previously been unaware.
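The idea of tying each field to its most authoritative source can be sketched as follows. This is not the Entity API, just a minimal illustration under our own assumptions: the ranking, source names and field names are hypothetical, and a real hierarchy would vary by jurisdiction and entity type.

```python
# Hypothetical ranking per field: earlier sources are treated as more authoritative.
SOURCE_HIERARCHY = {
    "legal_name": ["company_registry", "credit_bureau", "internal_record"],
    "registered_address": ["company_registry", "internal_record"],
}


def resolve_field(field, candidates):
    """Return (value, source) from the highest-ranked source that has a value."""
    for source in SOURCE_HIERARCHY.get(field, []):
        value = candidates.get(source)
        if value:
            return value, source
    return None, None


def build_zero_state_profile(raw):
    """raw maps field -> {source: value}; the result keeps value and provenance."""
    profile = {}
    for field, candidates in raw.items():
        value, source = resolve_field(field, candidates)
        profile[field] = {"value": value, "source": source}
    return profile


raw = {
    "legal_name": {"internal_record": "Acme BV", "company_registry": "Acme B.V."},
    "registered_address": {"internal_record": "1 Main Street"},
}
profile = build_zero_state_profile(raw)
```

Storing the winning source next to each value is what keeps the data provenance available for later reviews.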

Triage and Investigation

We then triggered a semi-automated, exception-based triage process for only those entities that could not be auto-resolved or where our risk indicators had identified risk areas requiring human intervention. This typically involved a relatively simple task, which we put in a queue for analysts to review. As these tasks usually only involved helping the system make a specific decision, the resulting file touch time was still minimal.

Only for entities identified as truly high risk did we have to trigger a more manual EDD or investigation process.
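The three outcomes described above (auto-resolved, analyst queue, EDD) can be expressed as a simple routing rule. The sketch below is illustrative only: the `resolved`, `risk_flags` and `confirmed_high_risk` keys are hypothetical names, not fields from the bank’s systems.

```python
from enum import Enum


class Route(Enum):
    AUTO_RESOLVED = "auto_resolved"
    ANALYST_QUEUE = "analyst_queue"
    EDD = "edd_investigation"


def triage(entity):
    """Route an entity based on hypothetical enrichment results.

    entity is a dict with:
      resolved            - True if the entity was matched automatically
      risk_flags          - list of risk indicators raised during enrichment
      confirmed_high_risk - True once the entity is confirmed as truly high risk
    """
    if entity.get("confirmed_high_risk"):
        return Route.EDD
    if not entity.get("resolved") or entity.get("risk_flags"):
        return Route.ANALYST_QUEUE
    return Route.AUTO_RESOLVED


clean = {"resolved": True, "risk_flags": []}
flagged = {"resolved": True, "risk_flags": ["adverse_media"]}
high_risk = {"resolved": True, "risk_flags": ["watchlist"], "confirmed_high_risk": True}
routes = [triage(clean), triage(flagged), triage(high_risk)]
```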

CLM integration

Part of the migration process focused on connecting seamlessly with the bank’s CLM platform, which is the bank’s system of record. With Arachnys in place, the CLM becomes much smarter at automating tasks and dealing with exception-based triggers. The CLM platform feeds Arachnys with customer-provided data and informs Arachnys of any changes in a risk score. In return, Arachnys feeds the CLM with high quality data and activates triggers when changes are detected.

What’s more, Arachnys also helps with data inheritance. It allows for the re-use of data attributes captured as part of another process, for example when KYC was conducted on a related entity whether it be a parent company, a subsidiary or a shared director.

Automated monitoring

Once fully migrated, the resulting profiles were ready for ongoing monitoring aimed at automatically detecting changes in the customers’ circumstances, whether these are entity changes (such as director or shareholder changes or a company opening a new branch office) or risk changes such as new sanctions, identification of new PEPs, new adverse media, a company going into liquidation, etc.

With all systems connected, we were in a position to start mapping out different monitoring policies for each of the entities. These monitoring policies were decided based on the entity’s risk profile, the materiality of detected changes in each of the captured data points (e.g. we might not be interested in a phone number change, but we do want to be alerted when an entity’s registration status changes to dormant) and the cost of monitoring against the underlying data source.

When a material change is detected, whether within the CLM or through Arachnys, it becomes a trigger event that either kicks off an automated process (e.g. to populate the KYC profile for a new shareholder) or is pushed into a queue of tasks for manual review by an analyst.
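As a rough sketch of how the three inputs (risk profile, materiality and monitoring cost) could combine into a policy, consider the example below. All scores, costs and thresholds are hypothetical values chosen for illustration, not the bank’s actual settings.

```python
# Hypothetical materiality score per data point (0 = ignore, 1 = always material).
MATERIALITY = {
    "phone_number": 0.1,
    "registration_status": 1.0,
    "directors": 0.8,
    "adverse_media": 0.7,
}

# Hypothetical relative cost of monitoring the source behind each data point.
MONITORING_COST = {
    "phone_number": 1,
    "registration_status": 1,
    "directors": 2,
    "adverse_media": 5,
}

# Hypothetical thresholds: higher-risk entities are monitored on more data points.
MIN_MATERIALITY = {"low": 0.9, "medium": 0.75, "high": 0.5}


def monitoring_policy(risk_level, max_cost_per_check):
    """Return the data points worth monitoring for an entity of this risk level."""
    threshold = MIN_MATERIALITY[risk_level]
    return sorted(
        field
        for field, score in MATERIALITY.items()
        if score >= threshold and MONITORING_COST[field] <= max_cost_per_check
    )


low_risk_policy = monitoring_policy("low", max_cost_per_check=5)
high_risk_policy = monitoring_policy("high", max_cost_per_check=5)
```

Under these assumed values, a low risk entity is only watched for registration status changes, while a high risk entity is also watched for director changes and adverse media. Phone number changes never qualify.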
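The choice between an automated process and manual review can be pictured as a small dispatcher. This is a simplified sketch with hypothetical event types and handler names. It does not reflect the actual CLM or Arachnys interfaces.

```python
from collections import deque

# Tasks waiting for manual review by an analyst.
analyst_queue = deque()


def populate_shareholder_profile(event):
    """Hypothetical automated handler: start a KYC profile for a new shareholder."""
    return f"profile_requested:{event['subject']}"


# Hypothetical registry of event types that can be handled without an analyst.
AUTOMATED_HANDLERS = {"new_shareholder": populate_shareholder_profile}


def handle_trigger(event):
    """Run the automated handler if one exists, otherwise queue for manual review."""
    handler = AUTOMATED_HANDLERS.get(event["type"])
    if handler is not None:
        return handler(event)
    analyst_queue.append(event)
    return "queued_for_review"


automated = handle_trigger({"type": "new_shareholder", "subject": "Jane Doe"})
manual = handle_trigger({"type": "new_sanction", "subject": "Acme B.V."})
```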

Results

We were able to get the bank’s entire book of customers into a monitorable state. Over 65% of the bank’s customers were successfully migrated automatically, and only a small number of entities required any significant manual intervention. Periodic review has been completely replaced by trigger-based reviews, which take only a fraction of the effort previously associated with manual KYC refresh. The total average file touch time for low and medium risk entities went down from 15 to 5 hours. If you multiply this saving of 10 hours per file by the number of entities refreshed annually, you can see how we saved the bank a substantial amount of money while significantly improving the quality of the output.

Finally, we enabled the bank to follow a much more risk-based approach, as opposed to one where every high risk entity had to be run through a manual EDD process every year and every high risk event required manual intervention every single time.
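The multiplication above is easy to reproduce. The sketch below uses the 15 and 5 hour figures from this case study. The number of entities refreshed per year is not stated here, so the 1,000 used in the example is a purely hypothetical input.

```python
def annual_hours_saved(entities_refreshed, hours_before=15, hours_after=5):
    """Analyst hours saved per year from the lower average file touch time."""
    return entities_refreshed * (hours_before - hours_after)


# Hypothetical volume, for illustration only.
example_saving = annual_hours_saved(1_000)
```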

Learn more about Arachnys Perpetual KYC.